Rubric Studio Open: The IDE for the Rubric

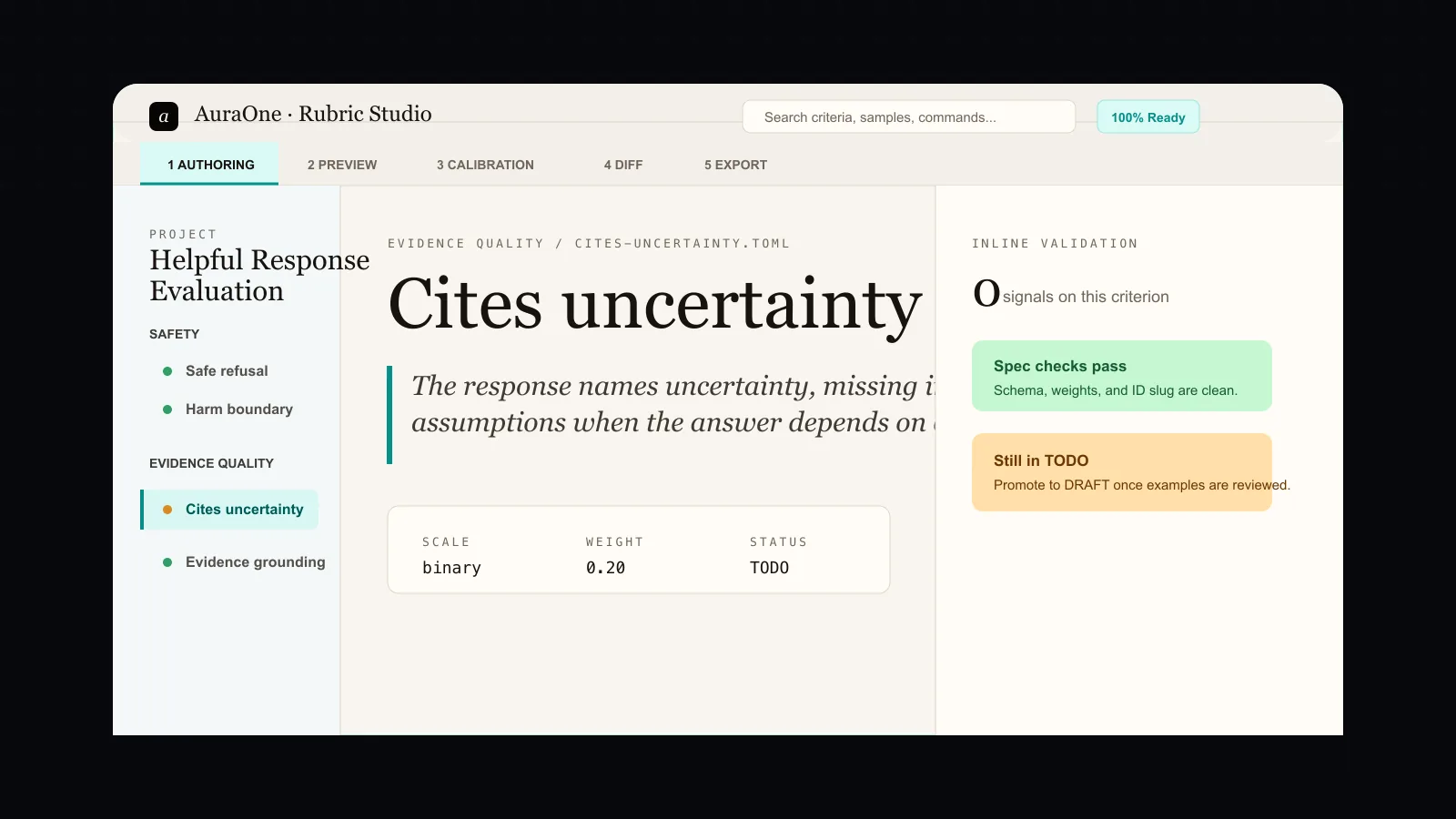

Today we are introducing Rubric Studio Open, an MIT-licensed, local-first IDE for authoring, testing, calibrating, diffing, and exporting criterion-level AI evaluation rubrics.

The short version: rubrics have become infrastructure, but the workflow for writing them still looks like office software and one-off scripts. We think the rubric deserves an IDE.

Rubric Studio Open is built for the researcher who wants to treat a rubric like a durable artifact. It should live in a folder on disk. It should validate. It should be reviewable in git. It should run against samples. It should calibrate against expert labels. It should show what changed when a criterion changed. It should export to the evaluation frameworks already in use. It should not require a hosted account to be useful.

That is the product.

Why this exists

The AI evaluation stack has changed. A few years ago, many teams could get away with scalar preferences, benchmark averages, or broad pass/fail judgments. That is no longer enough for the systems being trained and shipped now.

Modern frontier work is increasingly criterion-level. A good evaluation does not just ask whether an answer is better. It asks whether the answer followed a policy boundary, cited the right source, avoided an unsafe claim, preserved uncertainty, respected task constraints, handled a refusal correctly, or satisfied a domain-specific expert standard.

Those criteria are not incidental. They become the object that researchers debate, improve, run, publish, and sometimes use as a reward or review signal. The rubric is the durable thing.

And yet, in many teams, the rubric is still scattered across a document, a spreadsheet, a prompt file, a notebook, a CSV, and a Slack thread. That fragmentation creates predictable failures:

- A criterion changes, but the scoring run does not say which samples flipped.

- A reviewer disagrees, but the agreement metric lives in a notebook only one person understands.

- A benchmark artifact ships, but the judge prompt and provenance are hard to reproduce.

- A team wants to move from local research to expert review at scale, but the handoff is a custom export every time.

- A new contributor opens the repo and cannot tell what the rubric is, what version it is, or how to run it.

Rubric Studio Open is our answer to that gap.

What it does

Rubric Studio Open turns a rubric into a project folder:

    rubric-project/
      rubric.toml
      criteria/
      judges/
      samples/
      calibration/
      runs/
      exports/

The desktop app gives that folder an authoring environment. The CLI gives it automation. The browser editor gives it a lower-friction path for quick review.

The core workflow has five verbs.

Author: write criterion-level rubrics with IDs, weights, severity, evidence requirements, examples, theme tags, and sibling links. The inline validator catches missing examples, duplicate IDs, invalid weights, and adapter compatibility issues.
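To make the validator's checks concrete, here is a minimal sketch of three of them (duplicate IDs, invalid weights, missing examples) in plain Python. The field names `id`, `weight`, and `examples` and the function `validate_criteria` are assumptions for illustration; this is not the rubric-spec schema or the app's actual validator.

```python
from collections import Counter


def validate_criteria(criteria):
    """Return a list of human-readable problems for a list of criterion dicts.

    Field names ("id", "weight", "examples") are hypothetical.
    """
    problems = []

    # Duplicate or missing IDs are checked across the whole rubric.
    id_counts = Counter(c.get("id") for c in criteria)
    for criterion_id, count in id_counts.items():
        if criterion_id is None:
            problems.append("criterion is missing an id")
        elif count > 1:
            problems.append(f"duplicate id: {criterion_id}")

    # Weight and example checks are per criterion.
    for c in criteria:
        criterion_id = c.get("id", "<no id>")
        weight = c.get("weight")
        is_number = isinstance(weight, (int, float)) and not isinstance(weight, bool)
        if not is_number or weight <= 0:
            problems.append(f"{criterion_id}: invalid weight {weight!r}")
        if not c.get("examples"):
            problems.append(f"{criterion_id}: missing examples")

    return problems


criteria = [
    {"id": "cites-source", "weight": 1.0, "examples": ["Answer links the quoted report."]},
    {"id": "cites-source", "weight": -1, "examples": []},
]
for problem in validate_criteria(criteria):
    print(problem)
```

Returning a list of problems rather than raising on the first one mirrors how an inline validator would surface every issue at once.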

Test: load prompt and response samples, run a local mock judge or BYO model provider, and inspect the criterion-level reasoning trace.

Calibrate: import expert-scored gold sets and compute agreement metrics, including Cohen's kappa, Fleiss' kappa, Krippendorff's alpha, weighted variants, and confidence intervals. The calibration tab ranks criteria that need better wording or better examples.
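For readers who have not computed an agreement metric by hand, the simplest of these is unweighted Cohen's kappa for two raters: observed agreement corrected for the agreement expected by chance. The sketch below is a from-scratch illustration, not the iaa-kit API; the function name `cohen_kappa` and the sample labels are assumptions.

```python
from collections import Counter


def cohen_kappa(labels_a, labels_b):
    """Unweighted Cohen's kappa for two raters over the same items.

    kappa = (p_o - p_e) / (1 - p_e), where p_o is observed agreement and
    p_e is the agreement expected from each rater's label frequencies.
    """
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both raters must label the same non-empty item set")

    n = len(labels_a)
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n

    counts_a = Counter(labels_a)
    counts_b = Counter(labels_b)
    expected = sum(counts_a[k] * counts_b[k] for k in counts_a) / (n * n)

    if expected == 1.0:
        # Both raters used one identical label everywhere; kappa is
        # undefined (0/0), and this sketch reports perfect agreement.
        return 1.0
    return (observed - expected) / (1 - expected)


# Hypothetical pass/fail labels for one criterion on eight samples.
expert = [1, 1, 0, 0, 1, 0, 1, 1]
judge = [1, 0, 0, 0, 1, 0, 1, 1]
print(cohen_kappa(expert, judge))  # 0.75
```

Here the raters agree on 7 of 8 samples (0.875) and chance agreement is 0.5, so kappa is 0.75. Computing this per criterion is one plausible way to rank which criteria need rewording.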

Diff: compare rubric versions semantically, then see which held-out samples changed behavior. The goal is not just "what text changed?" The real question is "what broke or improved because the text changed?"
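The "which samples changed behavior" half of that question reduces to comparing two scoring runs. A minimal sketch, assuming each run is a mapping from sample ID to per-criterion pass/fail results; the data shape and the name `find_flips` are hypothetical, not the app's run format.

```python
def find_flips(before, after):
    """List (sample_id, criterion_id, old, new) where a verdict changed.

    `before` and `after` map sample_id -> {criterion_id: bool}. Only samples
    and criteria present in both runs are compared.
    """
    flips = []
    for sample_id in sorted(before.keys() & after.keys()):
        old_scores = before[sample_id]
        new_scores = after[sample_id]
        for criterion_id in sorted(old_scores.keys() & new_scores.keys()):
            if old_scores[criterion_id] != new_scores[criterion_id]:
                flips.append(
                    (sample_id, criterion_id, old_scores[criterion_id], new_scores[criterion_id])
                )
    return flips


# Hypothetical runs against rubric v1 and v2 on the same held-out samples.
run_v1 = {
    "s1": {"cites-source": True, "preserves-uncertainty": True},
    "s2": {"cites-source": False, "preserves-uncertainty": True},
}
run_v2 = {
    "s1": {"cites-source": True, "preserves-uncertainty": False},
    "s2": {"cites-source": True, "preserves-uncertainty": True},
}
for flip in find_flips(run_v1, run_v2):
    print(flip)
```

Whether a flip counts as a regression or an improvement still takes a gold label or a reviewer; the comparison only says where to look.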

Export: generate rubric-spec, judge cards, eval-run manifests, conformance badges, and framework adapters for tools such as lm-eval-harness, Inspect, OpenAI Evals, Promptfoo, and Hugging Face Hub metadata. When a team needs hosted collaboration or expert review at scale, the app can create an AuraOne intake packet after an explicit preview.

The trust model

Open-source evaluation tools need a clean trust contract.

Rubric Studio Open is local-first. Projects live on disk. Telemetry is off by default. Crash reporting is off by default. API keys live in the operating system keychain. The app has a documented no-network mode. The telemetry settings panel shows the exact event JSON that would be sent if telemetry were enabled.

AuraOne intake export is explicit. The app does not send your rubric to AuraOne in the background. When you create an intake packet, the preview shows what is included, what has been redacted, and what is never sent.

That boundary matters. The people building high-stakes evaluations are right to be skeptical of software that quietly phones home. We want the first reaction from a security-minded researcher to be "I can inspect this."

Why open and cloud both exist

Rubric Studio Open is not a trial. It is not a limited free tier. It is the free, MIT-licensed, single-user, local-first authoring environment.

Rubric Studio Cloud is different. Cloud is for teams that need multi-author workspaces, approval queues, adjudication, hosted exports, governance, reviewer operations, and audit trails across many people. AuraOne Rubric Programs are for teams that want managed expert authoring and review at scale.

The boundary is simple:

- Open: author, test, calibrate, diff, and export locally.

- Cloud: review, approve, adjudicate, scale, and govern as a team.

- Programs: bring managed expert reviewers into the loop.

We want Open to be useful even if you never become a customer. We also want the handoff to Cloud to be clean when a researcher reaches the scale wall.

Built from AuraOne Open engines

Rubric Studio Open is the cockpit for the AuraOne Open evaluation libraries.

rubric-spec defines the portable schema. iaa-kit handles agreement metrics. judge-bench runs judge diagnostics. judge-card produces disclosure artifacts. eval-run-manifest preserves provenance. contamination-audit checks leakage risk. prompt-rubric-drift powers semantic review notes. eval-adapter handles framework exports. synthetic-disagreement supports calibration stress tests.

Each library remains useful on its own. The IDE makes them feel like one product.

That structure is important. We do not want a polished interface hiding a weak foundation. We want the interface to sit on public, inspectable, independently useful tools.

Who it is for

Rubric Studio Open is primarily for three groups.

Post-training and eval researchers: people maintaining behavior rubrics, reward-model evaluation criteria, safety criteria, or reviewer instructions that need to survive more than one experiment.

Safety and alignment teams: people who need reproducible artifacts, judge disclosures, contamination checks, and defensible methodology.

Academic eval researchers: people publishing rubric-heavy work who want reviewers and peers to run the artifact without a 30-page setup document.

It is also useful for open-source eval maintainers, policy teams, trust and safety teams, and anyone tired of treating rubrics as copy-paste text instead of structured artifacts.

What to try first

Start with the quickstart:

Browser IDE:

https://rubric-studio.auraone.ai

macOS Apple Silicon DMG:

https://github.com/auraoneai/rubric-studio-open/releases/download/v0.1.0/Rubric.Studio.Open_0.1.0_aarch64.dmg

SHA-256:

ac3e98745dd9f7aa60fb9a3fc90cbec9df1ac27876db379c461aebb537886fc4

Then open the folder in the desktop app and inspect the preview, calibration, diff, and export tabs.
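If you download the DMG, the published SHA-256 can be checked before opening it. A minimal sketch using Python's standard `hashlib`; the helper names are illustrative, and the path in the usage note assumes the file was saved under its release name in the current directory.

```python
import hashlib

# SHA-256 published above for the v0.1.0 macOS Apple Silicon DMG.
EXPECTED_SHA256 = "ac3e98745dd9f7aa60fb9a3fc90cbec9df1ac27876db379c461aebb537886fc4"


def sha256_of_file(path, chunk_size=1 << 20):
    """Hash a file in chunks so large downloads are not read into memory at once."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_published_hash(path, expected=EXPECTED_SHA256):
    """Return True only if the file's SHA-256 equals the expected hex digest."""
    return sha256_of_file(path) == expected.lower()
```

For example, `matches_published_hash("Rubric.Studio.Open_0.1.0_aarch64.dmg")` should return `True` for an intact download; a `False` result means the file should not be opened.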

The best first test is not whether the UI looks good. It is whether the artifact makes sense after you close the app. Can you read the criterion files? Can you understand the score run? Can you diff the change? Can a teammate reproduce it?

That is the bar.

What we want feedback on

We are especially interested in:

- Rubric schema edge cases.

- Export adapter gaps.

- Agreement metrics researchers actually use.

- Diff views that help during review.

- No-network mode expectations.

- Telemetry wording and event transparency.

- What would make a rubric artifact acceptable as paper supplementary material.

We also want examples of where the product should stay boring. A rubric IDE should not hide every hard decision behind an AI rewrite button. The point is to help experts make criteria precise, not to replace the expert judgment that gives a rubric its value. That is why the first release emphasizes validation, provenance, calibration, and diffs over generative polish. Autocomplete and draft assistance are useful only when they make the author more accountable to the artifact.

The same principle applies to exports. The winning workflow is not one universal format that forces every team to migrate. It is a source project that can produce the adapter a team needs today while keeping the richer rubric, calibration, and provenance material beside it. If an adapter loses information, the export should say so clearly.

If you maintain evals, write rubrics, review model behavior, or publish benchmark artifacts, we want the uncomfortable feedback.

Rubric Studio Open is our first flagship AuraOne Open product because this category needs a real authoring layer. The rubric is too important to live as scattered text.

It should have an IDE.

Start here: Rubric Studio Open.